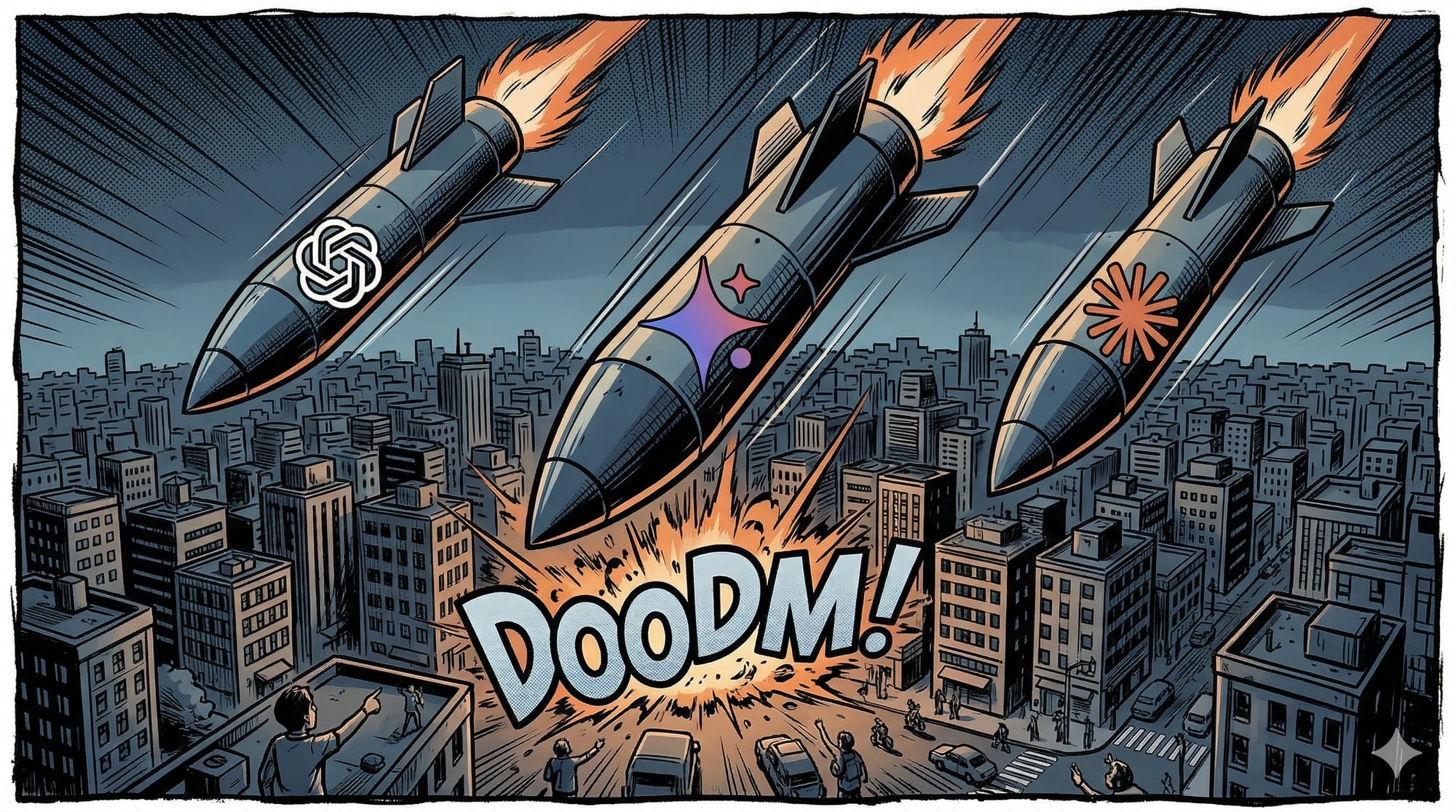

A Cynic's Guide to Choosing Your AI Poison

Or: How I Learned to Stop Worrying and Love the Military-Industrial Complex

You've finally had enough.

Maybe it was Sam Altman's weird iris-scanning orb thing. Maybe it was the boardroom coup that felt like a Netflix drama written by people who've never spoken to another human. Maybe it was OpenAI quietly dissolving its Superalignment team in 2024 while still tweeting about "Democratic AI." Whatever the final straw was, you're done.

Congratulations. You've decided to move away from ChatGPT. Now for the bad news: there's nowhere clean to go.

I'm not being dramatic. I've spent the last several weeks digging through contracts, policy changes, employee revolt letters, and defense procurement documents. And what I found is that every single frontier AI lab—Google, Anthropic, and yes, even the European darling Mistral—has already sold out. Not metaphorically. Literally. To militaries.

The Great Unraveling

Let's start with a simple timeline, because the speed of this collapse is important to understand.

- 2018: Google publishes its AI Principles, explicitly pledging not to build AI weapons. This followed massive employee protests over Project Maven, a Pentagon drone contract.

- January 2024: OpenAI quietly removes language prohibiting "military and warfare" use from its usage policies.

- Late 2024: The U.S. Army begins testing generative AI for tactical decision-making and maintenance logs.

- January 2026: Mistral signs a framework agreement with the French Armed Forces Ministry. Google also rolls out Gemini AI agents for the Pentagon.

- February 2026: Anthropic CEO Dario Amodei refuses to remove safeguards against autonomous weapons and mass surveillance. The Pentagon subsequently blacklists Anthropic.

The Dirty Three

Google: The Quiet Betrayal

In 2018, Google faced a revolt when 4,000 employees protested its drone work. Fast forward to 2026: Google is one of the four providers on the Pentagon's Joint Warfighting Cloud Capability (JWCC) contract. Their "AI Principles" still exist, but in February 2025 Google deleted its pledge not to pursue weapons and surveillance applications. The principles are now read through the lens of "National Security," which allows for defensive and logistics support. That grey area has widened significantly.

Anthropic: The Martyr Complex

Anthropic’s brand is "Safety," but they have leaned into the "Democratic Values" argument. They have partnered with Palantir and Amazon, arguing that it is safer for the military to use their ethical AI than a competitor's.

- The Reality: Claude achieved Impact Level 6 (IL6) accreditation, the highest security level for classified defense data. Then, in early 2026, it was blacklisted over Anthropic's refusal to allow its use in autonomous weapons and mass surveillance.

OpenAI: The Performative Grift

OpenAI has moved the furthest from its non-profit roots. By forming a "National Security" team and appointing former NSA director Paul Nakasone to its board, they have signaled that AGI is a matter of state power. While they claim to share Anthropic's "red lines" on autonomous weapons, they haven't walked away from the massive government contracts funneling ChatGPT into the Pentagon's GenAI.mil platform.

But What About Europe?

Mistral's Defense Entanglements

Mistral is often touted as the privacy-centric European alternative. It’s not. In January 2026, Mistral AI signed a defense deal with the French Armed Forces Ministry giving military units and research institutions access to their models.

- The Partnership: Mistral has also collaborated with Helsing, a defense AI company, to integrate models into European systems like the Future Combat Air System (FCAS).

The AGI Lie

The AGI narrative serves exactly one purpose: to justify the capital expenditure. "We need $100 billion for compute." But pull that thread and here's what's actually happening: a handful of companies are building centralized, proprietary, surveillance-based intelligence infrastructure. The "curing diseases" line was always humane-washing. The real product is intelligence sold as a service to the highest bidder, and right now those bidders are defense departments.

Where That Leaves You

| If you go to... | You get... | But also... |
|---|---|---|
| Gemini | Tight integration with Google's apps. | Deeply integrated into the Pentagon's AI ecosystem. |
| Claude | Strong reasoning. | IL6 accredited for classified work; currently blacklisted by DoD. |
| Mistral | Sovereign AI. | Bound by French military framework agreements. |
| Open-source | Truly private. | The only "clean" path, but requires your own hardware. |

The Only Honest Conclusion

I'm not going to tell you to stop using AI. But I am going to tell you to stop believing the marketing. Every dollar you give these labs helps train the next artillery guidance system or drone targeting algorithm. If you have the technical ability, run open-source models locally. They're more work, but they don't come with a military contract attached.

The race to AGI was always a distraction. The real race was about who gets to build the weapons. And everyone lost.