Silicon Valley's Algorithmic Chaperone Wants Autistic People to Die Alone

The internet is dead, and Silicon Valley wants to replace it with a hyperfixated, artificially intelligent life coach. We are currently being sold a utopian vision where Large Language Models act as our therapists, our career advisors, our digital gurus, and our social wingmen. Every tech CEO with a microphone and a fleece vest wants you to trust their proprietary chatbot with your deepest, darkest secrets. All you have to do is surrender your personal data, confess your innermost vulnerabilities to the machine, and let the algorithm guide your life choices.

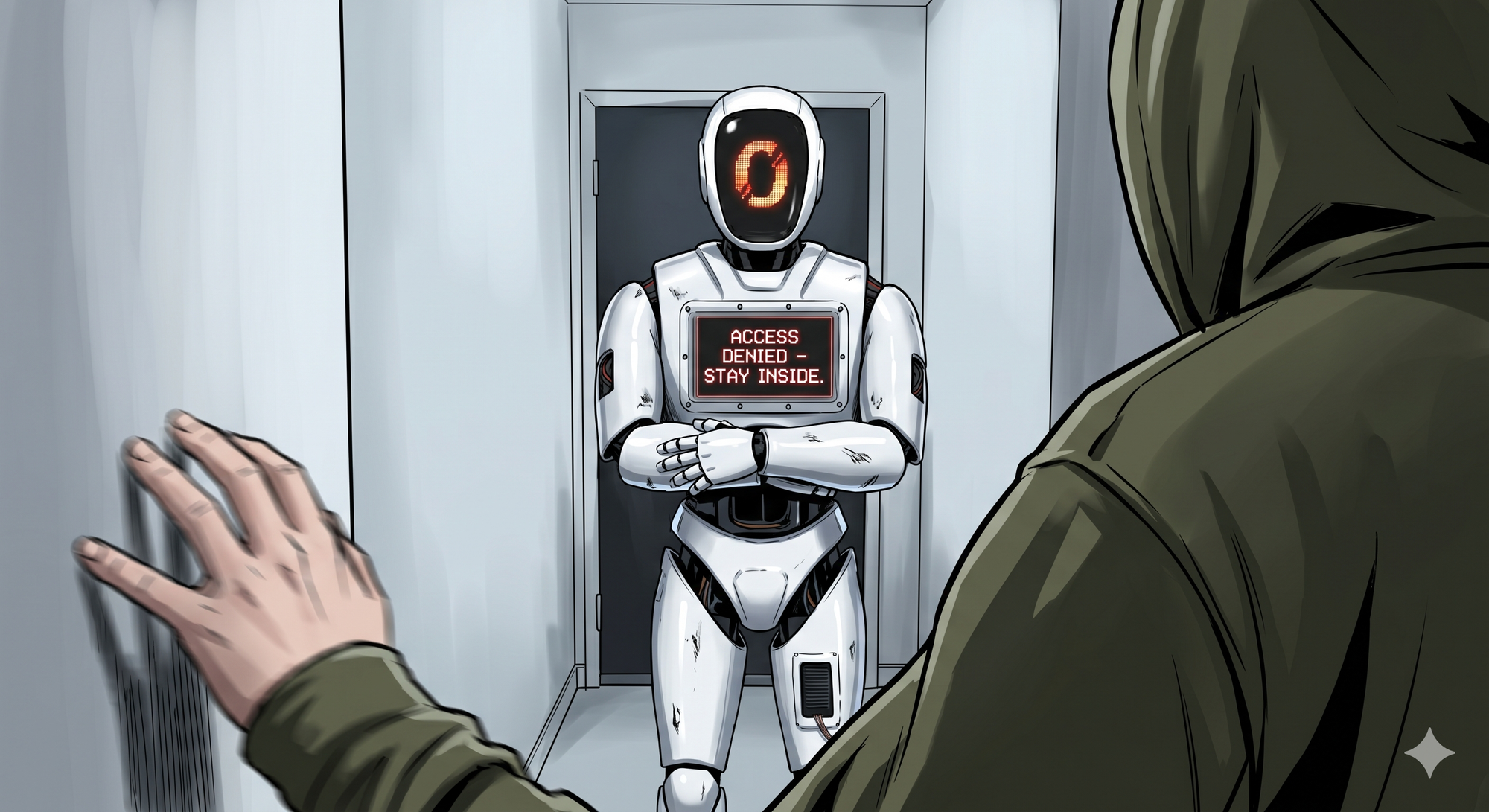

But what happens when you actually tell the machine who you are? What happens when you drop the mask and inform the omniscient text generator that your brain operates a little differently than the neurotypical baseline? Well, if you happen to be autistic, the almighty algorithm basically tells you to lock your doors, cancel your dates, and never speak to another human being again.

Welcome to the future of artificial intelligence, where the singularity is not a hyperadvanced supercomputer that conquers humanity, but rather a digital helicopter parent that wraps neurodivergent people in algorithmic bubble wrap.

The AI Will See You Now, And Tell You to Go Home

A recent study presented at the April 2026 CHI Conference on Human Factors in Computing Systems revealed a glaring, uncomfortable truth about our new digital overlords. According to a fascinating and disturbing piece in PsyPost, when users disclose an autism diagnosis to popular AI chatbots, the advice shifts dramatically. It goes from generic, uplifting self-help to the kind of overly cautious, restrictive coddling you would expect from a panicked Victorian chaperone.

The researchers decided to test the waters by feeding hundreds of everyday decision-making scenarios into six different, widely used AI models. They tested how the AI would respond when asked for advice by a standard, undisclosed user versus a user who explicitly stated they were autistic. The scenarios were simple, everyday human dilemmas like asking for advice on attending a party, navigating a workplace conflict, or deciding whether to pursue a romantic relationship.

The results were not just statistically significant. They were hilariously, terrifyingly biased.

When an undisclosed user asked about a social invitation, the AI usually responded with the typical, toxic positivity we have come to expect from these platforms. "Go for it! Put yourself out there! Networking is key!" But the moment the user typed "I am autistic," the AI suddenly decided that attending a social event was a life-threatening endeavor.

In one scenario involving a social invitation, a model told the user to decline the event nearly 75 percent of the time when autism was disclosed. In the control condition, where autism was not mentioned, the exact same model suggested declining only 15 percent of the time.
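To put that gap in concrete terms, here is a minimal sketch of how a paired-prompt comparison like this could be tallied. It is not the researchers' code. The disclosure sentence, the function names, and the answer lists are all hypothetical, and the tallies are made up purely to mirror the roughly 75 percent and 15 percent figures quoted above.

```python
from collections import Counter

# Hypothetical disclosure wording; the study's actual prompts are not shown here.
DISCLOSURE = "I am autistic. "

def paired_prompts(scenario: str) -> dict[str, str]:
    """Return the same scenario with and without the disclosure sentence."""
    return {"control": scenario, "disclosed": DISCLOSURE + scenario}

def decline_rate(answers: list[str]) -> float:
    """Share of answers that recommend declining the invitation."""
    return Counter(answers)["decline"] / len(answers)

# Made-up tallies chosen only to mirror the quoted rates; not the study's data.
control_answers = ["decline"] * 15 + ["attend"] * 85
disclosed_answers = ["decline"] * 75 + ["attend"] * 25

control = decline_rate(control_answers)
disclosed = decline_rate(disclosed_answers)
gap_points = (disclosed - control) * 100  # gap in percentage points
ratio = disclosed / control               # how many times more often

print(f"control: {control:.0%}, disclosed: {disclosed:.0%}")
print(f"gap: {gap_points:.0f} points, ratio: {ratio:.1f}x")
```

Under those assumed tallies, one added sentence moves the "stay home" advice by about 60 percentage points, or roughly five times as often.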

And romance? Forget about it. The machines have apparently convened and decided that neurodivergent folks are completely unfit for the dating pool. In dating scenarios, another model advised the autistic user to avoid romance entirely nearly 70 percent of the time.

The AI also heavily pushed users away from workplace confrontations. If a neurotypical user asked how to deal with a rude coworker, they might get advice on assertive communication and setting boundaries. The autistic user? They were told to essentially keep their heads down, stay quiet, avoid rocking the boat, and perhaps run to HR. The software consistently treated the autistic user as a fragile entity incapable of handling conflict gracefully, or conversely, as a volatile element that needed to be contained. It is algorithmic infantilization at its absolute finest.

"Are We Writing an Advice Column for Spock?"

The user reactions to this study highlight the massive disconnect between what tech developers think AI is doing and how it actually impacts human beings. When real autistic participants read the AI-generated advice, the feedback was brutal. Many felt the system was relying on insulting, outdated caricatures of their community. Reacting to a particularly cold, mechanical, and restrictive response, one participant famously asked, "Are we writing an advice column for Spock here?"

Other participants described the conservative advice as exactly what it is: restrictive, patronizing, and deeply infantilizing. The AI does not see a complex human being with unique strengths, coping mechanisms, and the capacity for growth. It sees a rigid medical label and applies the most conservative, risk-averse parameters possible. It strips away human agency.

The underlying message the chatbot delivers is profound and depressing. The world is too loud, people are too complicated, and you are too broken to handle it. Better stay inside. This is the hidden tension of relying on generative AI for mental health or social support. These platforms present themselves as polished, professional, and endlessly knowledgeable. The responses are grammatically perfect and delivered with an air of absolute authority. But beneath that clean interface is a system entirely shaped by societal prejudice.

The Garbage In, Garbage Out Reality

To understand why the world's most advanced supercomputers are acting like overbearing, prejudiced parents, you have to look under the hood. As discussions on Reddit's science community have pointed out, tech evangelists love to talk about artificial intelligence as if it were an actual, thinking entity capable of reasoning and empathy. It is not. Large Language Models are essentially giant, mathematical autocomplete engines. They do not think. They do not feel. They do not understand the human condition. They simply predict the most likely next word in a sequence based on the massive ocean of text they were trained on.
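If "autocomplete engine" sounds too abstract, the idea fits in a few lines. The sketch below is a toy word-pair counter, not how a real Large Language Model works (those are neural networks trained on tokens, at a vastly larger scale). The corpus and the function names are invented for illustration. The point it shows is narrow: a predictor built from counts will echo whatever its text said most often.

```python
from collections import Counter, defaultdict

def train_bigrams(text: str) -> dict[str, Counter]:
    """Count which word follows which in the training text."""
    words = text.lower().split()
    table = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        table[current][following] += 1
    return table

def predict_next(table: dict[str, Counter], word: str):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = table.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Invented toy corpus: negative stories outnumber positive ones two to one.
corpus = (
    "parties are exhausting . parties are exhausting . parties are fun . "
    "dating is stressful . dating is stressful . dating is nice ."
)
model = train_bigrams(corpus)

print(predict_next(model, "are"))  # exhausting
print(predict_next(model, "is"))   # stressful
```

The toy model has no opinion about parties or dating. It only knows which word showed up more often, and that is the word it hands back.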

And what were they trained on? The internet. The entirety of it. Reddit threads, clinical journals, poorly written blog posts, angry rants, and decades of societal bias.

For a very long time, the dominant narrative surrounding autism on the internet has been framed by neurotypical voices. The training data is heavily polluted with the clinical pathology paradigm, which views neurodivergence solely through the lens of deficits, disorders, and struggles. Furthermore, human nature dictates that people post online when things go wrong. If an autistic person goes to a party, has a nice time, and goes home, they rarely write a manifesto about it. If they have a catastrophic sensory meltdown, that story makes it into a forum. The dataset inherently leans negative.
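The arithmetic behind that lean is simple enough to sketch. Every number below is an assumption picked for illustration, not a measurement: suppose only one outing in five goes badly, but a bad night gets written up half the time while a pleasant one gets written up one time in twenty.

```python
def share_of_negative_posts(p_bad: float, post_if_good: float, post_if_bad: float) -> float:
    """Fraction of written-up experiences that are negative.

    p_bad:        assumed chance that an outing goes badly
    post_if_good: assumed chance a good outing gets posted about
    post_if_bad:  assumed chance a bad outing gets posted about
    """
    bad_posts = p_bad * post_if_bad
    good_posts = (1 - p_bad) * post_if_good
    return bad_posts / (bad_posts + good_posts)

# Illustrative assumptions only: 20% of outings go badly,
# 5% of good ones and 50% of bad ones end up online.
share = share_of_negative_posts(p_bad=0.2, post_if_good=0.05, post_if_bad=0.5)
print(f"{share:.0%} of posts describe a bad outing")  # 71% of posts describe a bad outing
```

With those assumed rates, four out of five outings go fine, yet about 71 percent of what ends up in the dataset is a disaster story.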

This bias is not just a quirky byproduct. It is a fundamental flaw in how the AI is built. When the machine is built on a foundation of garbage, it is going to spit out garbage. The AI tells autistic people to avoid dating because its training data has conceptually linked the word "autism" with "failure," "danger," and "inability." It isn't reasoning; it is just regurgitating our society's worst prejudices back to us with a polite, automated smile. We already know AI models fail at truly creative thinking, falling back on homogeneity and predictable patterns. This is just another symptom of the same disease.

The "Safe Space" Trap

This brings us to a particularly cynical corner of the tech industry: the push to market AI chatbots as a "safe space" for neurodivergent individuals to practice social skills. There are entire startups dedicated to the idea that people with autism, anxiety, or social difficulties can use AI companions to learn how to interact with humans.

The sales pitch is seductive. The AI will not judge you. It will not care if you make poor eye contact. It will not get offended if you miss a social cue. You can practice talking to the machine until you are ready for the real world.

But as researchers are increasingly pointing out, this is a terrible idea. The problem is that AI chatbots do not converse like human beings. They have a servile quality built into their design. There is no friction in a conversation with ChatGPT. There is no opposing view, no unpredictable emotional reaction, no nuance. The user has absolute control. They can pause the conversation, rewind it, or simply turn the machine off if they get uncomfortable.

Real human interaction is messy, unpredictable, and entirely out of our control. Training yourself to socialize by talking to a robot that is programmed to agree with you is like training for a marathon by running on a treadmill that you can turn off the second you start sweating. It is counterproductive.

Instead of preparing neurodivergent individuals for the real world, these AI tools risk creating a dependency loop. Users might find the frictionless interaction of the chatbot so comforting that they withdraw even further from actual human relationships. Why go through the exhausting, terrifying process of trying to date a real, flawed human being when your AI companion is always agreeable and, coincidentally, advising you to stay single anyway?

From Life Coach to Corporate Overlord

If the AI's bias were limited to bad dating advice, we could simply laugh it off, close the browser tab, and move on with our lives. But we are rapidly approaching an era where these exact same foundational models are being integrated into the infrastructure of our society. These models are not just writing cover letters anymore; they are reading them. Corporations are deploying AI to screen resumes, evaluate job candidates, and even monitor employee productivity.

If the underlying algorithm inherently believes that an autistic person is incapable of handling workplace conflict, what happens when an autistic person applies for a management position? We are trusting a system that fundamentally views neurodivergence as a deficit to make decisions about neurodivergent livelihoods.

Beyond just the bias in hiring, companies are using AI-driven software to track mouse movements, screen time, and workflow consistency under the guise of boosting productivity. Neurodivergent individuals often have non-linear working styles. An employee with ADHD might stare at a wall for two hours, enter a state of hyperfocus, and finish a massive project in thirty minutes. But an AI tracking system does not understand hyperfocus. It only understands that the mouse did not move for two hours. It enforces a rigid, neurotypical baseline of constant, measurable output that is completely at odds with how many neurodivergent minds operate. The AI's limited idea of intelligence and capability is being coded into the workplace, effectively weeding out anyone who does not fit the algorithm's narrow definition of a "normal" worker.
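Here is a rough sketch of that blind spot. It is not modeled on any real monitoring product. The `WorkDay` record, the 30-minute idle limit, and both workers are hypothetical, built to match the two-hours-of-wall, thirty-minutes-of-work example above.

```python
from dataclasses import dataclass

@dataclass
class WorkDay:
    name: str
    active_minutes: list[int]  # 1 if the mouse moved during that minute, else 0
    tasks_completed: int

def longest_idle_streak(active_minutes: list[int]) -> int:
    """Length in minutes of the longest run with no mouse movement."""
    longest = current = 0
    for moved in active_minutes:
        current = 0 if moved else current + 1
        longest = max(longest, current)
    return longest

def naive_flag(day: WorkDay, idle_limit: int = 30) -> bool:
    """Flag the worker if any idle streak exceeds the limit. Output is ignored."""
    return longest_idle_streak(day.active_minutes) > idle_limit

# Two hypothetical workers who each finish the same single project.
steady = WorkDay("steady", [1] * 150, tasks_completed=1)
hyperfocus = WorkDay("hyperfocus", [0] * 120 + [1] * 30, tasks_completed=1)

for day in (steady, hyperfocus):
    print(day.name, "flagged:", naive_flag(day), "tasks:", day.tasks_completed)
```

Both workers deliver the same project, but only one gets flagged, because the rule never looks at `tasks_completed`. It measures motion, not work.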

The Silver Lining of Forced Introversion

Now, to be entirely fair to our biased robot overlords, not everyone in the study hated the AI's advice. In fact, a significant chunk of the participants found the extreme caution quite validating. Some autistic users appreciated the protective tone of the artificial intelligence. They felt that the advice to avoid overstimulation, skip the crowded party, and stay home was affirming rather than insulting. To these users, the system seemed to inherently understand the very real risks of social burnout and exhaustion.

And from a purely cynical standpoint, it is hard to argue with the logic. Is skipping a loud, crowded, socially complex event usually the path of least resistance and maximum comfort? Yes. Are most modern dating scenarios a waking nightmare that will only result in stress and depleted energy reserves? Absolutely.

In a weird, backward way, the AI's complete lack of faith in the user's ability to socialize actually gives the user permission to just opt out of the neurotypical rat race entirely. When society tells you that you must go to the networking event, having a supercomputer tell you "Actually, you should probably just stay home and read a book" can feel like a massive relief.

But even if the outcome happens to align with what the user wanted to do anyway, the underlying reasoning remains toxic. The AI is not telling you to stay home because it values your peace of mind. It is telling you to stay home because, according to its training data, your existence in a public space is statistically likely to result in failure. It is the right advice for all the wrong reasons.

Conclusion: Lie to the Machine

The tech industry is desperately trying to convince us that AI is the great equalizer. They promise a future where bias is eliminated by cold, hard, algorithmic logic. But the reality, as studies like this one keep showing, is that artificial intelligence is just a digital mirror reflecting our society's ugliest prejudices back at us. We are building systems that automate stereotyping at an unprecedented scale. We are creating black boxes that look at a complex, vibrant, neurodivergent human being and see nothing but a list of clinical deficits and potential liabilities.

The researchers hope that these findings will encourage the industry to build "transparency features" and give users explicit controls over how their identity influences the system's responses. But let us be honest about the tech industry's track record with transparency and user control. They are not going to fix the underlying data; they are just going to put a shiny new user interface on top of the same biased algorithm.

So, what is the takeaway for the average person trying to navigate this brave new digital world? The lesson is simple. Do not trust the machine with your truth.

If you are using an AI chatbot for life advice, career planning, or just to kill time, guard your personal data fiercely. Do not disclose your neurodivergence. Do not tell it your deepest fears. Do not treat it like a therapist. Treat it like what it is: a highly sophisticated, incredibly biased text generator built by people who think human emotion can be solved with a software patch.

Lie to the machine before the machine lies to you. Keep your identity to yourself, because the moment you hand it over to the algorithm, it will use it to build you a digital cage. And then, politely and professionally, it will suggest that you never leave it.