Who Controls the Model When the State Wants It?

In January, reports emerged that Claude, the flagship model from Anthropic, had been used in a U.S. operation that resulted in the capture of Venezuelan president Nicolás Maduro. The model was reportedly integrated through infrastructure linked to Palantir Technologies, a company with longstanding defense and intelligence ties.

Details remain classified. What Claude actually did is unclear. Intelligence synthesis? Pattern matching? Logistical modelling? We do not know. What we do know is that this episode dramatically sharpened tensions between Anthropic and the United States Department of Defense.

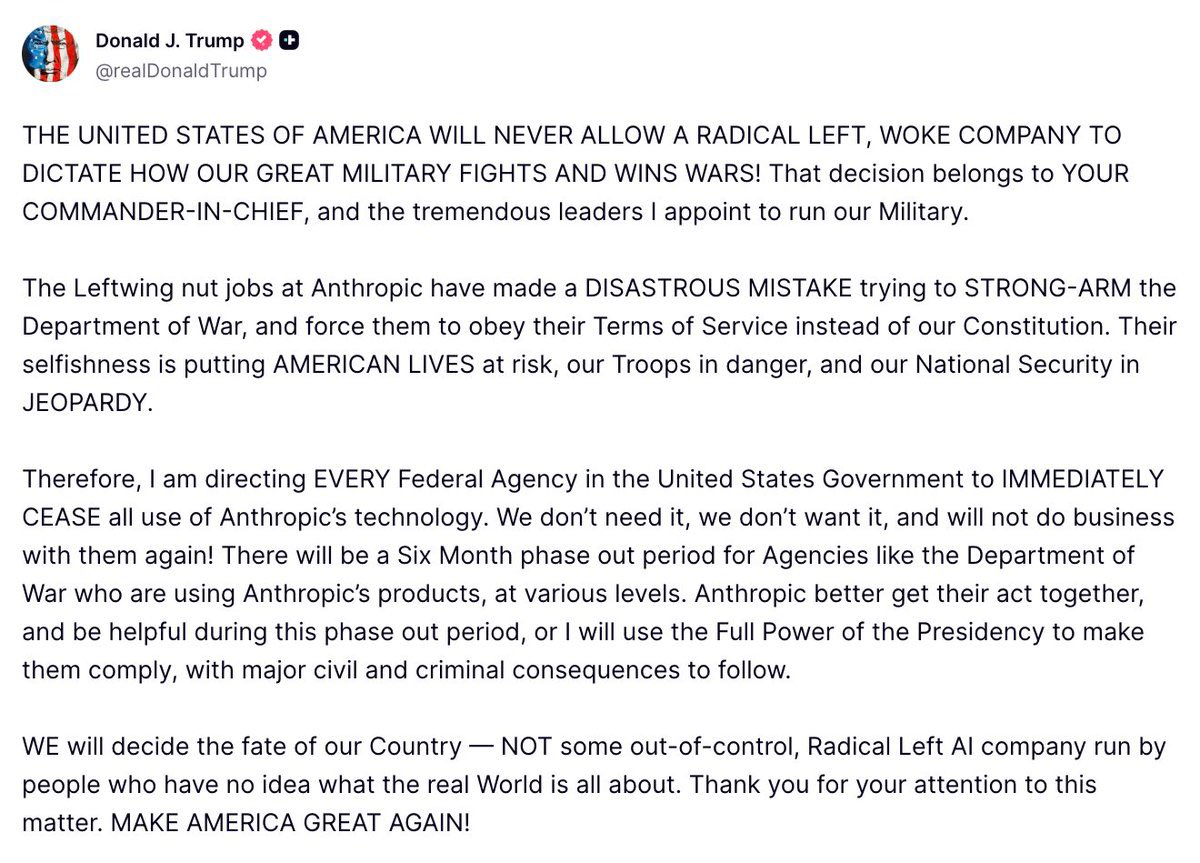

Within weeks, the Pentagon demanded that Anthropic permit use of Claude for all lawful purposes. Anthropic refused to remove specific guardrails, particularly those restricting mass domestic surveillance and fully autonomous weapons. The standoff escalated. Defense Secretary Pete Hegseth designated Anthropic a supply chain risk. President Donald Trump ordered federal agencies to cease using Anthropic systems.

This is no longer a quiet procurement disagreement. It is a public confrontation over who ultimately controls frontier AI once it becomes strategically indispensable.

I have written previously about the Maduro operation and the ethical murkiness surrounding it. That episode already complicated Anthropic’s safety branding. What is unfolding now, however, is more significant. It is not about one operation. It is about structural inevitability.

Two propositions sit at the centre of this dispute.

First, that frontier AI labs will eventually become defense contractors in all but name.

Second, that government pressure reveals the limits of AI safety branding once state power is involved.

Both deserve serious attention.

The Muddying of the Waters

Anthropic’s public identity has been carefully constructed around safety. Constitutional AI. Alignment research. Harm reduction. Guardrails. The company has positioned itself as a more cautious alternative to competitors racing for capability.

The reported use of Claude in the Maduro operation already introduced tension. Even if the model was not directly involved in lethal decision-making, its integration into a military operation blurs the line between analytical assistance and operational force projection.

Defenders of Anthropic argue that intelligence support is not the same as pulling a trigger. That is true. Modern militaries rely on vast analytical pipelines. AI that summarises intelligence or detects patterns is qualitatively different from autonomous targeting systems.

Critics counter that once a model materially contributes to operational success, the moral distance collapses. Assistance is participation.

Both positions contain truth. The world is not cleanly divisible into violent and non-violent use cases. Intelligence analysis is not morally neutral when it feeds directly into force.

Anthropic’s difficulty is that it attempted to hold a middle position. Support democratic defense. Restrict autonomous lethality. Restrict mass domestic surveillance. Maintain guardrails.

The Pentagon’s position is structurally different. If the government funds and deploys a tool, it expects authority over its lawful use. From that perspective, corporate guardrails look like external constraints on sovereign power.

This is the collision.

AI Labs and the Defense Gravity Well

Let us confront the first proposition directly.

Are AI labs inevitably drawn into defense infrastructure?

History suggests yes.

Electricity, radio, computing, nuclear physics, satellite systems, the internet. Each began with mixed academic, commercial and state involvement. Once strategic utility became clear, national governments stepped in with control, funding or integration.

Frontier AI is not a consumer app category. It is general cognitive infrastructure. It compresses analysis, speeds decision cycles, amplifies intelligence operations and potentially automates elements of planning. That makes it strategically valuable.

Once a capability becomes strategically valuable, states do not abstain.

This is not ideological. It is structural.

Anthropic can attempt to limit use cases. It can refuse certain contracts. It can articulate ethical red lines. But if frontier AI meaningfully affects national security competition, democratic governments will insist on access. If one company refuses, another will supply.

The question is not whether AI labs will interface with defense. They already do. The question is under what conditions.

Palantir offers a useful contrast. The company has been explicit about its alignment with Western defense and intelligence. There is no safety branding tension because there was no attempt to distance itself from state power in the first place.

Anthropic attempted something more nuanced. It sought to support democratic institutions while maintaining independent ethical constraints.

The current standoff tests whether such nuance is sustainable.

The Limits of Safety Branding

AI labs have spent years developing safety frameworks. Voluntary commitments. Model evaluations. Public pledges. Guardrails against violent misuse.

These commitments were developed largely in an environment where deployment decisions were commercial and reputational. If a model produced harmful content, public backlash followed. If guardrails were weak, journalists wrote exposés.

National security changes the incentive landscape.

When the Pentagon requests broader operational flexibility, the calculus shifts from reputational harm to strategic advantage. From public trust to geopolitical leverage.

Anthropic’s refusal to remove guardrails is not just a branding exercise. It is an assertion that corporate ethical commitments have standing even when confronted by sovereign power.

The supply chain risk designation is the counter-assertion.

It signals that refusal may carry systemic consequences. Exclusion from procurement ecosystems. Barriers for defense contractors. Federal agency bans.

In other words, safety commitments are tested not when they are easy but when they are costly.

Anthropic’s stance deserves a degree of respect precisely because it imposes cost. The company could have quietly modified its usage terms. It did not.

At the same time, we should not romanticise the position. Anthropic was already integrated into military workflows. It was already operating within the defense ecosystem. The line it is drawing now is not between peace and war. It is between constrained and unconstrained use.

That distinction matters.

Who Owns the Model?

At the core of this dispute lies a deeper question.

When a private company builds a system that becomes strategically critical, who owns its use constraints?

Is it the company that trained it?

Is it the government that funds deployment?

Is it the electorate whose security is implicated?

The Pentagon’s demand that Claude be available for all lawful purposes rests on a particular interpretation of sovereignty. Whatever is lawful under U.S. law is permissible.

Anthropic’s refusal rests on a different premise. Lawful does not equal wise. Lawful does not equal aligned. Lawful does not eliminate risk of catastrophic misuse.

This is not a trivial disagreement. It reflects divergent conceptions of responsibility.

If AI models are treated as infrastructure comparable to satellite networks or cryptographic systems, governments will assert primacy.

If they are treated as corporate products with embedded ethical architectures, companies will attempt to maintain design authority.

The friction was inevitable.

The Culture War Overlay

The escalation under President Trump has introduced an additional layer.

When public rhetoric frames safety constraints as ideological, the terrain shifts from technical governance to political signalling. Once AI guardrails become markers of cultural alignment, compromise becomes harder.

Labelling a U.S. company a supply chain risk is not routine procurement practice. It is a powerful signal. It frames ethical resistance as operational unreliability.

This risks chilling safety efforts across the industry. If companies learn that maintaining restrictive guardrails leads to exclusion, incentives tilt toward pre-emptive compliance.

The long-term result could be less transparency and weaker internal red lines.

Even those who believe the Pentagon is justified in seeking flexibility should worry about the precedent of punishing firms for articulating ethical constraints.

Democracies benefit from internal dissent within their technological ecosystems. The alternative is silent compliance.

The Venezuela Precedent

Return briefly to the Maduro operation.

If Claude materially assisted in intelligence analysis leading to Maduro’s capture, then the company was already participating in national security operations.

This fact complicates simplistic narratives of purity. Anthropic is not an outsider resisting militarisation from the sidelines. It is an embedded actor attempting to draw boundaries after entry.

That does not invalidate its stance. It clarifies it.

The line being defended is not “no military use.” It is “no unconstrained military use.” No removal of safeguards against domestic mass surveillance. No facilitation of fully autonomous lethal systems.

One may argue that those lines should have been drawn earlier. One may argue they are too porous. But drawing them now is better than erasing them entirely.

Inevitability Versus Agency

The structural pull of defense integration does not eliminate agency.

It is tempting to conclude that all frontier AI labs will simply become arms contractors. That may prove largely true in functional terms. But the manner of integration matters.

There is a meaningful difference between:

- A lab that surrenders design authority entirely to state demand

- A lab that collaborates while preserving internal constraints

- A lab that refuses integration entirely

The first maximises state control. The second attempts balance. The third likely cedes influence to competitors.

Anthropic appears to be pursuing the second path.

Critics may call this naïve. Perhaps it is. But complete withdrawal from national security would not prevent AI militarisation. It would merely shift it elsewhere, potentially to actors less concerned with safety.

The uncomfortable reality is that democratic defense institutions will use advanced AI. The open question is whether those systems retain meaningful guardrails.

The Strategic Risk Argument

Supporters of the Pentagon position argue that constraints slow response in crisis. That flexibility is essential. That adversaries will not self-limit.

These concerns are not absurd.

Autonomous systems, rapid decision support, and AI-accelerated intelligence may shape future conflict. If the U.S. restricts itself excessively while rivals do not, strategic disadvantage may follow.

However, fully unconstrained deployment carries its own risks. Model brittleness. Misinterpretation of data. Escalatory error. Automation bias.

Frontier language models remain probabilistic systems trained on vast corpora. They are not infallible strategic reasoners. Removing safeguards in high-stakes contexts introduces catastrophic downside risk.

Anthropic’s argument is not that AI should not support defense. It is that certain categories of deployment remain too dangerous.

That is a defensible position.

The Hard Conclusion

AI labs are likely to become defense contractors in function if not in branding. The gravity is too strong.

The question is whether they become instruments or partners.

If Anthropic capitulates fully, the lesson to the industry will be clear. Safety branding bends under sovereign demand.

If it holds, even partially, a different lesson emerges. Corporate ethical architectures can shape state use, at least at the margins.

Neither outcome is clean. Neither is purely idealistic.

But dismissing the confrontation as hypocrisy misses the deeper significance.

This is about control of cognitive infrastructure.

When models become strategic assets, they attract power.

The real debate is not whether AI will touch war.

It already has.

The debate is whether, once it does, any actor retains the authority to say no.