AI as a Cognitive Crutch: How Over-Reliance on Chatbots Weakens Student Memory and Learning

AI chatbots promise instant answers and effortless productivity, but at what cost to student learning? New research reveals how over-reliance on these tools can weaken memory, erode critical thinking, and create an illusion of understanding, leaving students unprepared when the tech is taken away.

The Convenience Trap

In early 2026, a study by André Barcaui at the Federal University of Rio de Janeiro made waves in education circles. Students who used AI chatbots to study scored 11 percentage points lower on retention tests than peers who used traditional methods. The AI-assisted students felt more confident in their knowledge, but their test results told a different story.

Barcaui’s findings highlight a growing concern: while AI tools like ChatGPT can make learning feel easier and faster, they often undermine the cognitive processes that lead to deep, durable understanding. Psychologists call this "cognitive offloading"—the tendency to delegate mental work to external tools. When students outsource thinking to AI, they miss out on the struggle that strengthens memory and builds expertise.

This isn’t about banning technology. It’s about recognizing that the design of AI tools can interfere with how we learn. When students rely on chatbots for explanations, essay writing, or problem-solving, they bypass the "desirable difficulties" that make learning stick. The result is a generation of students who may perform well on assignments but struggle to retain knowledge, think critically, or apply concepts independently.

For educators, the challenge is clear: How can we use AI to enhance learning without sacrificing the cognitive effort that makes it meaningful?

The Science of Memory: Why Struggle Matters

The Role of Retrieval Practice

Memory isn’t passive. It strengthens when we actively retrieve information—whether by recalling facts, explaining concepts, or solving problems. This process, called retrieval practice, is one of the most effective learning strategies identified by cognitive science.

AI chatbots disrupt this process. Instead of grappling with difficult material, students can ask a bot for instant answers. The immediate result looks impressive, but the long-term cost is steep. In Barcaui’s experiment, students who used ChatGPT spent 45 percent less time on assignments than those who studied traditionally. Yet when tested 45 days later, the traditional learners outperformed their AI-assisted peers by a wide margin. The difference wasn’t just in scores; it was in the quality of understanding. Traditional learners could explain concepts in their own words and apply knowledge to new scenarios, while the AI group struggled to recall basic definitions.

"Productivity does not replace competence. There is an abysmal difference between delivering a piece of work and understanding the process of its creation."

—André Barcaui, ChatGPT as a cognitive crutch: Evidence from a randomized controlled trial on knowledge retention

The Illusion of Competence

One of the most insidious effects of AI is the illusion of competence it creates. When a chatbot generates a polished essay or solution, students naturally assume they’ve mastered the material. But as Barcaui’s retention test revealed, this confidence is often misplaced.

This phenomenon isn’t new. Researchers have documented how calculators can erode mental math skills and GPS can weaken spatial memory. AI takes this further by handling higher-order thinking tasks like analysis, synthesis, and even creativity.

A 2026 report from the University of Technology Sydney calls this the "performance paradox": AI can boost a student’s performance on an immediate task while diminishing the durable learning that is the ultimate goal of education. The report warns that this effect is especially pronounced for novice learners who are still building foundational knowledge and skills.

For example:

- A student who uses AI to generate an essay on the causes of World War I may produce a passable paper without ever grappling with the complex interplay of nationalism, militarism, and alliances.

- A math student who relies on an AI tutor to solve equations may ace homework but freeze on an exam that requires explaining their reasoning.

- A biology student who asks a chatbot to summarize cell division may memorize the steps of mitosis without understanding why they matter.

Cognitive Load Theory: Why AI Can Overwhelm or Underwhelm

Cognitive Load Theory explains why AI’s impact varies so widely. Our working memory has limited capacity, and learning happens when we encode new information through effortful processing. AI can tip the balance in two ways:

- Overload: Poorly designed AI tools can bombard students with information, leaving them passive recipients rather than active learners.

- Underload: When AI does the work for students, it removes the cognitive friction that drives learning. The OECD calls this "metacognitive laziness"—the disengagement that happens when students offload too much thinking to a machine.

The best uses of AI in education don’t replace cognitive effort; they scaffold it.

The Evidence: What Research Tells Us

Study 1: Barcaui’s Randomized Controlled Trial (2026)

Method:

- 120 undergraduate business students were randomly assigned to one of two groups.

- AI group: Used ChatGPT to research and prepare a presentation on AI ethics, societal impacts, and technical foundations.

- Traditional group: Used course notes, academic databases, and standard internet search (but no AI chatbots).

- Both groups spent up to two weeks on the assignment, then delivered presentations to peers.

- 45 days later, participants took a surprise 20-question multiple-choice test.

Results:

| Metric | AI Group | Traditional Group |
|---|---|---|
| Average study time | 3.2 hours | 5.8 hours |
| Retention test score | 57.5 percent correct | 68.5 percent correct |
| Confidence in knowledge | High | Moderate |

Key Findings:

The AI group’s presentations were indistinguishable from the traditional group’s in quality. But on the retention test, the traditional group outperformed the AI group by 11 percentage points. The gap was widest for technical topics, suggesting that reliance on AI does the most damage when the material is complex.
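The headline figures can be checked against the results table with a few lines of arithmetic. The sketch below uses only the values reported in the table and the 20-question test length; the variable names are illustrative and not taken from the study.

```python
# Values copied from the results table; names are illustrative only.
ai_hours, traditional_hours = 3.2, 5.8
ai_score, traditional_score = 57.5, 68.5  # percent correct
n_questions = 20  # length of the retention test

# Absolute gap in retention, in percentage points.
gap_points = traditional_score - ai_score

# The same gap expressed as questions on the 20-question test.
gap_questions = gap_points / 100 * n_questions

# Relative reduction in study time for the AI group.
time_reduction = (traditional_hours - ai_hours) / traditional_hours * 100

# Relative shortfall of the AI group's score versus the traditional group's.
relative_shortfall = gap_points / traditional_score * 100

print(f"Retention gap: {gap_points:.1f} percentage points")
print(f"Gap in questions: {gap_questions:.1f} of {n_questions}")
print(f"Study time reduction: {time_reduction:.1f} percent")
print(f"Relative score shortfall: {relative_shortfall:.1f} percent")
```

The study-time reduction works out to about 44.8 percent, which matches the roughly 45 percent quoted earlier. The 11-point gap is an absolute difference. Relative to the traditional group’s score, the AI group fell short by about 16 percent, or a little over two questions out of 20.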

Study 2: UTS Network for Quality Digital Education (2026)

This landmark report identified two types of cognitive offloading:

- Beneficial offloading: Using AI for low-stakes tasks like grammar checks or citation formatting.

- Detrimental offloading: Using AI to do the intrinsic work of learning, such as planning, generating ideas, or writing whole answers.

The report’s authors warn that detrimental offloading is riskiest for younger students, disadvantaged students, and novice learners who haven’t yet built the "thinking infrastructure" needed to evaluate AI’s output.

"Students who already possess high levels of domain knowledge and strong metacognitive skills will be able to leverage AI for beneficial offloading and accelerate their learning. Students without these skills will be susceptible to detrimental offloading and bypassing the very learning they need."

—Artificial intelligence, cognitive offloading and implications for education

Study 3: AI and Critical Thinking (Zhai et al., 2025)

A study published in Societies found that students who heavily relied on AI dialogue systems showed diminished decision-making abilities, weaker critical analysis skills, and reduced creativity. The researchers attributed these effects to a feedback loop: the more students offloaded thinking to AI, the less they exercised their own critical faculties—and the more they came to depend on the tool.

Study 4: The OECD Digital Education Outlook (2026)

The OECD’s 2026 report analyzed data from 40 countries and found that 95 percent of students reported using AI tools for schoolwork. The most common uses were explaining concepts, summarizing articles, and structuring thoughts. However, students who used AI for high-stakes tasks performed worse on standardized tests than peers who used AI only for low-stakes help.

The report concludes that AI’s benefits depend entirely on how it’s used:

"Generative AI should be used selectively and purposefully for pedagogical reasons—to enrich learning, not replace cognitive effort or weaken the human relationships at the heart of education."

Classroom Implications: How Should Educators Respond?

1. Teach AI Literacy as a Core Skill

AI isn’t going away. The goal isn’t to ban it, but to teach students how to use it responsibly. This means:

- Evaluating AI output: Can students spot errors, biases, or gaps in an AI-generated response?

- Asking better questions: Instead of prompting "Write me an essay on photosynthesis," teach them to ask, "What are the key debates about the efficiency of photosynthesis in different plant types?"

- Using AI as a sparring partner: Tools that act as "novices" force students to explain concepts in their own words, revealing their true understanding.

Classroom Activity:

- AI Fact-Checking Drill: Give students an AI-generated paragraph on a topic they’ve studied. Ask them to identify inaccuracies, missing context, or logical flaws.

- Prompt Engineering Workshop: Teach students to craft prompts that require them to do the heavy lifting.

2. Design Assignments That Resist Offloading

To encourage deep thinking:

- Emphasize process over product: Require drafts, annotations, or oral explanations of how students arrived at their answers.

- Use "AI-proof" assessments: Open-ended questions, in-class debates, or projects that require personal reflection or local context.

- Leverage AI for scaffolding: Have students use AI to generate retrieval practice questions or counterarguments to their own thesis.

Example:

Instead of: "Write a report on climate change."

Try: "Use AI to find three conflicting perspectives on climate policy. Then, write a 2-page response defending your own position—citing which sources you trust and why."

3. Make Struggle Visible (and Valuable)

Students often see struggle as a sign of failure. Reframing it as a necessary part of learning can counteract AI’s illusion of ease.

- Normalize mistakes: Share examples of famous scientists, writers, or mathematicians who grappled with difficulties.

- Use "productive failure" tasks: Give students problems slightly beyond their current ability, then debrief the solutions as a class.

- Model metacognition: Think aloud as you solve a problem, highlighting where you get stuck and how you recover.

4. Prioritize Human Interaction

AI can’t replace the social dimension of learning. Studies show that peer discussion, teacher feedback, and collaborative problem-solving lead to deeper understanding than solo AI use.

Strategies:

- Peer teaching: Assign students to explain a concept to a classmate before consulting AI.

- Socratic seminars: Use AI to generate provocative questions, then discuss them as a class.

- Teacher check-ins: Require one-on-one conferences to discuss progress and misunderstandings.

The Equity Issue: Who Benefits (and Who Doesn’t)?

AI’s cognitive risks aren’t distributed equally. The UTS report highlights a digital divide in how students use these tools:

- Advantaged students with strong prior knowledge and metacognitive skills use AI to accelerate learning.

- Disadvantaged students with weaker foundations use AI to compensate, often in ways that deepen their gaps.

How to Close the Gap:

- Provide targeted support for students who struggle with self-regulated learning.

- Ensure all students learn how to use AI effectively, not merely that they have access to it.

- Balance tech-rich and tech-free tasks.

Looking Ahead: The Future of AI in Education

The Case for Optimism

When used intentionally, AI can:

- Personalize learning through adaptive quizzes.

- Free up teacher time by automating grading for low-stakes assignments.

- Spark curiosity by simulating historical events or scientific phenomena.

The key is pedagogy-first design. As the Chronicle of Higher Education puts it:

"The future of AI in education will be decided less by technology and more by how we design learning around it."

Policy Recommendations

Experts urge schools and governments to:

- Adopt national standards for AI use in education.

- Invest in teacher training to help educators integrate AI without sacrificing rigor.

- Fund research on long-term cognitive effects, especially for young learners.

Practical Takeaways for Teachers

- Use AI to generate retrieval practice questions

- Teach AI literacy alongside digital literacy

- Design assignments that require personal reflection or local context

- Model critical thinking when evaluating AI output

- Encourage productive struggle before turning to AI

AI as a Tool, Not a Crutch

André Barcaui’s study isn’t a call to reject AI; it’s a wake-up call to use it wisely. The goal isn’t to make learning harder for its own sake, but to ensure that technology serves human development.

As educators, our challenge is to help students navigate a world where AI is everywhere, but deep thinking is still rare. That means:

- Valuing effort over ease.

- Prioritizing understanding over output.

- Teaching students to think with AI, not to let AI think for them.

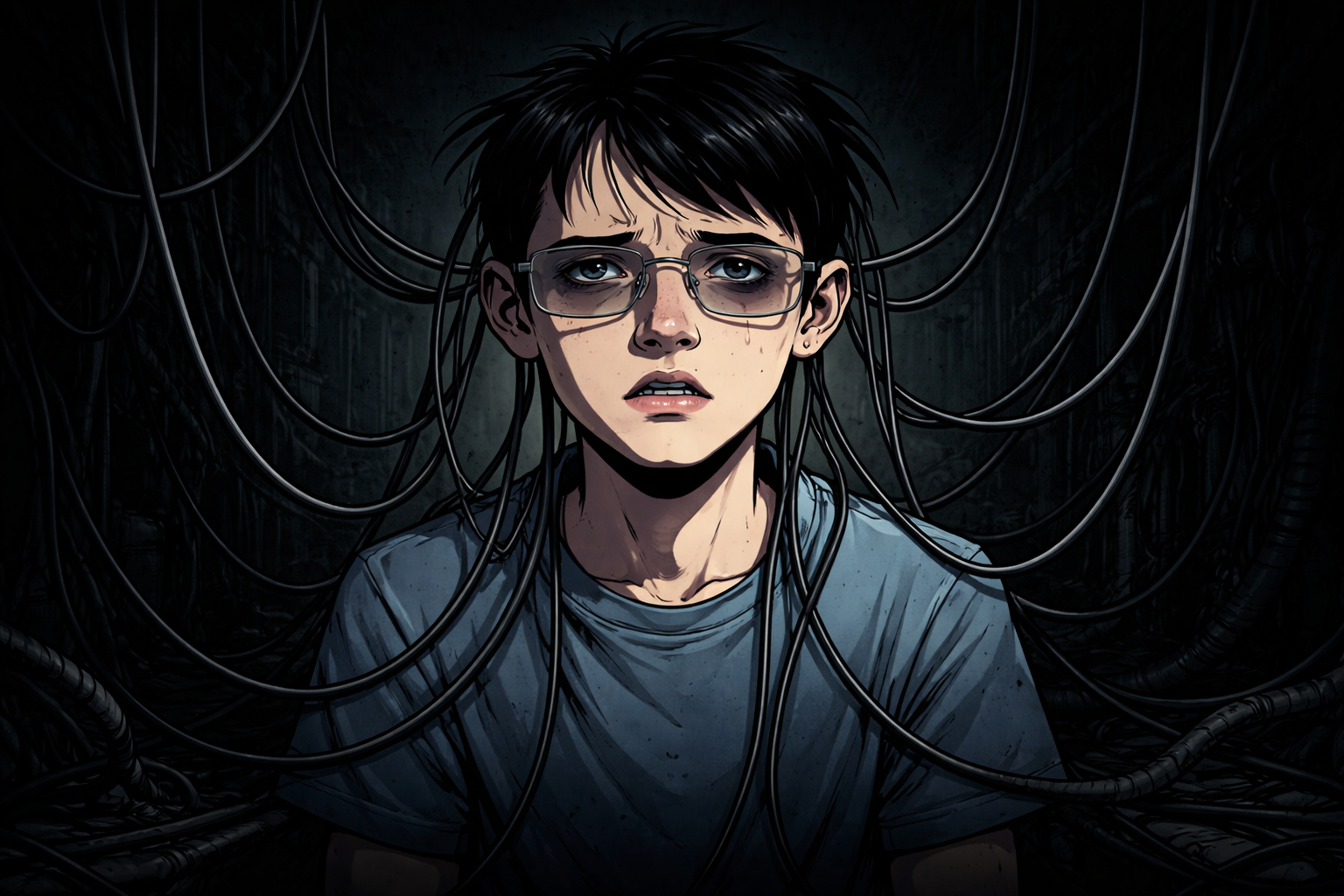

The crossroads illustrated above isn’t just a metaphor. It’s a choice every student and every teacher faces daily. The path we take will shape not just test scores, but the kind of thinkers our students become.

Further Reading:

- Barcaui, A. (2026). ChatGPT as a cognitive crutch: Evidence from a randomized controlled trial on knowledge retention.

- UTS. (2026). Artificial intelligence, cognitive offloading and implications for education.

- OECD. (2026). Digital Education Outlook.

- Zhai, X. et al. (2025). AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking.